LLMs Are Tools

Why everyone has been duped into thinking they're more than that

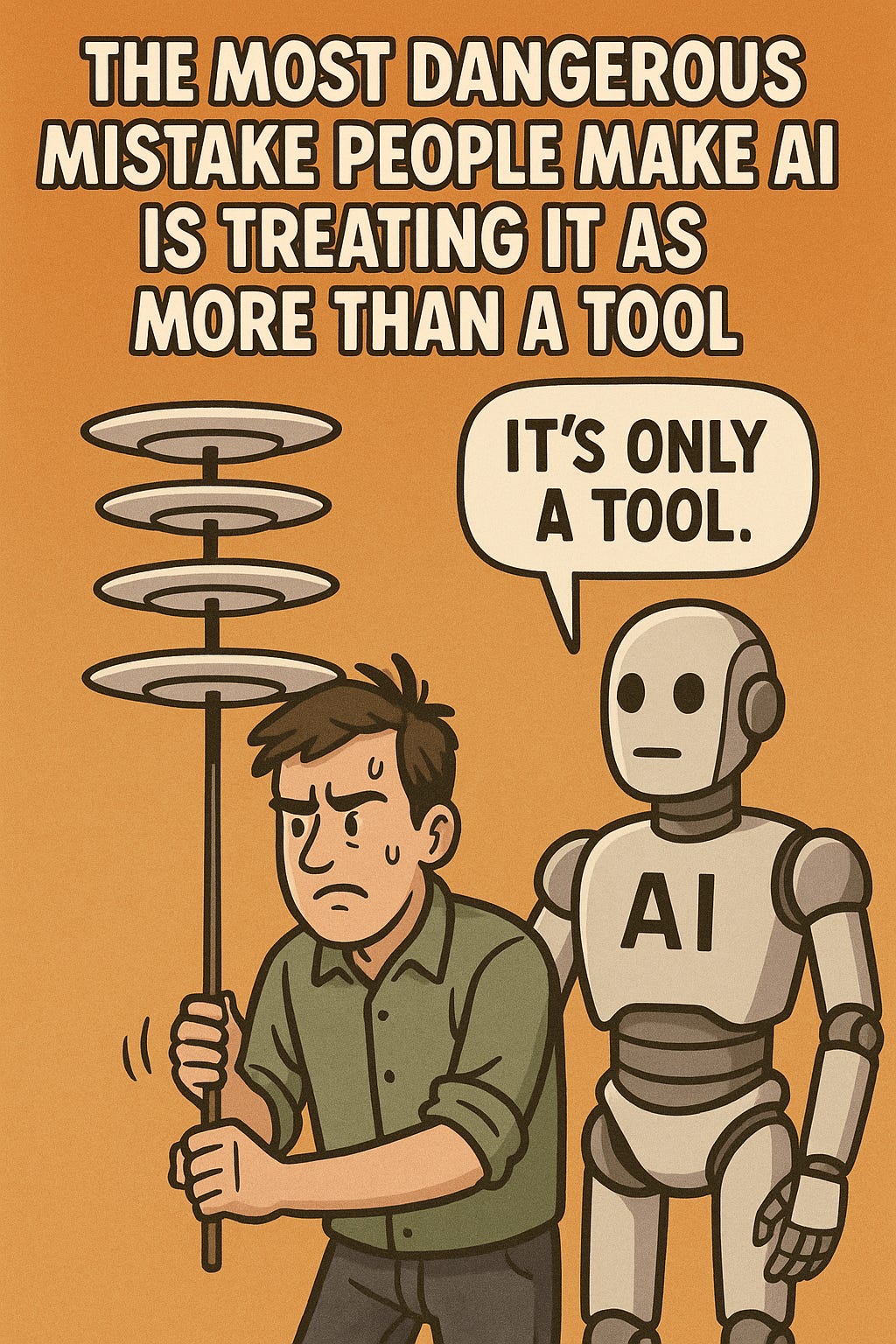

The most dangerous mistake 99.999% of people are making about AI is that they treat it as more than a tool. All problems with its use and expectations around its behavior stem from this one critical mistake. If AI is treated as a tool, the responsibility pivots to the creators of the tool.

It’s why Sam Altman has been brainwashing the masses by saying that it is more than a tool, suggesting that it has a mind of its own rather than being a direct result of the math and engineering choices behind it. He abdicates responsibility and puts it on society and users. How convenient. If people wake up from that delusion and realize the problems in the math and construction of these models, they will see them for what they are.

LLMs are flawed tools that require extreme skill to use, like spinning a stack of plates balanced on sticks stacked on top of each other, with the sticks (prompts) in between. If you’re skilled, you can work at the weights level and not bother with sticks that introduce their own physics. But you can do it either way, as long as you’re skilled and approach the LLM as a flawed, complex tool, not as a mysterious phenomenon with its own independent agency.

LLMs produce an output under a given set of constraints (including the input and the output generated so far), but in today’s models that process is entirely probability driven. It’s like Douglas Adams’ [Im]probability Drive, although his was a diffusion model...
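To make "entirely probability driven" concrete, here is a minimal Python sketch of how a single next token could be chosen: raw scores (logits) are turned into probabilities with a softmax, scaled by a temperature, and one token is drawn at random. The toy vocabulary, the logits, and the function names `softmax` and `sample_next_token` are hypothetical illustrations, not any particular model’s code; real decoders typically add further steps such as top-k or nucleus filtering.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores (logits) into a probability distribution.

    Lower temperature sharpens the distribution; higher temperature flattens it.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max before exponentiating, for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_token(vocab, logits, temperature=1.0, rng=None):
    """Draw one token at random, weighted by the softmax probabilities."""
    rng = rng or random.Random()
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]


# Hypothetical toy vocabulary and scores for a prompt like "The sky is";
# a real model scores tens of thousands of tokens, not four.
vocab = ["blue", "clear", "falling", "green"]
logits = [3.0, 2.0, 0.5, -1.0]

probs = softmax(logits)
for token, p in zip(vocab, probs):
    print(f"{token}: {p:.3f}")

# Same input, ten independent draws: the result is sampled, not decided.
rng = random.Random(0)
samples = [sample_next_token(vocab, logits, rng=rng) for _ in range(10)]
print(samples)
```

Running the draws with a different seed can produce different tokens from the identical input: the output is a sample from a distribution shaped by the weights and the input. In a full autoregressive model, the sampled token is appended to the input and the process repeats, which is how the output itself becomes one of the constraints.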

Now, I’m sure I lost everyone, but the point is, LLMs are tools. If you think of them as anything other than tools, you’re in a delusion wrapped in mystery. It’s time to wake up and put the blame on companies like OpenAI, which are funded with tens of billions of dollars and yet do not understand the math of what they’re building.

Brilliant. The 'responsibility pivots to the creators' part is just so spot on. Exactly!

I think of GenAI in terms of academia. Having a bachelor’s degree means you have shown a fundamental understanding of your field and are able to express those fundamentals formally. Having a master’s degree means you understand the field deeply enough to reconfigure it in novel ways. Having a PhD means you understand the field to the extent that you can contribute to it: information that it did not contain before you.

GenAI is hyped up to seem like it has a PhD, when in reality most of it barely has a bachelor’s, and only a rare few might be at a master’s level. There is not a single PhD in the AI realm.